You Don't Need Better AI. You Need Better Accountability.

For the past three posts I've been asking hard questions.

Who can prove AI value? Where are you actually starting from? Who owns the output?

Today I want to give you something more useful than a question.

Here's where I would start with anyone who's ready to fix the accountability problem:

The 3-Part Fix:

🔴 → 🟢 doesn't happen with better tools.

It happens with three unglamorous decisions:

1. Name the owner — by function.

Not one AI czar for the whole organization. Every function that uses AI to inform decisions needs a named, documented quality owner. Media. Analytics. Strategy. Creative. One person and one accountability for each.

2. Define "good" before you run the workflow.

If success criteria don't exist before AI executes, no one can meaningfully review what comes out. This is a standards problem disguised as a technology problem.

3. Build a QA gate — lightweight but non-negotiable.

One reviewer. Documented criteria. One sign-off before AI output influences a real decision. Not a committee. Not bureaucracy. A checkpoint.
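For teams that want to wire this checkpoint into a workflow, here is a minimal sketch of the three decisions as code. Everything in it is an assumption for illustration: the `AIOutput` fields, the `OWNERS` registry, and the reviewer names are hypothetical, not a real system.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AIOutput:
    """An AI-generated output awaiting review (all fields are illustrative)."""
    function: str                      # e.g. "Media", "Analytics"
    summary: str
    criteria: list[str]                # success criteria, written before the run
    checks_passed: dict[str, bool] = field(default_factory=dict)
    signed_off_by: Optional[str] = None


# Hypothetical registry: one named quality owner per function.
OWNERS = {"Media": "alex", "Analytics": "priya"}


def sign_off(output: AIOutput, reviewer: str) -> None:
    """Record a sign-off only if criteria exist, the reviewer owns the
    function, and every criterion has been marked as passed."""
    if not output.criteria:
        raise ValueError("no success criteria were defined before the run")
    if OWNERS.get(output.function) != reviewer:
        raise PermissionError(
            f"{reviewer} is not the quality owner for {output.function}"
        )
    failed = [c for c in output.criteria if not output.checks_passed.get(c, False)]
    if failed:
        raise ValueError(f"criteria not met: {failed}")
    output.signed_off_by = reviewer


def can_drive_decision(output: AIOutput) -> bool:
    """The gate: nothing moves without a recorded sign-off."""
    return output.signed_off_by is not None
```

The point of the design is that the gate is a single boolean. Whatever consumes the output only has to ask `can_drive_decision(...)`, and the only way to make it true is through the named owner and the documented criteria.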

Here's the reframe that changes everything:

Most organizations treat AI output like Google search results.

Probably right. Act on it.

The organizations that will win treat AI output like an agency recommendation.

Valuable input. Still requires human judgment before it moves.

The uncomfortable reality:

You don't need better AI.

You need better accountability structures around the AI you already have.

Which of the three feels most urgent for your organization right now?

The Question That Silences Rooms

Ask this question and there’s a good chance the room will get quiet.

"Who in your organization is ultimately responsible for the quality and accuracy of AI-generated outputs?"

Not who uses AI. Not who bought the tools.

Who actually owns the output and vouches for its accuracy?

Quick self-score:

🔴 No one. Whoever runs the workflow takes informal responsibility — if anyone does.

🟡 Team leads review outputs loosely but there are no defined standards or accountability.

🟠 There's a general sense of ownership but it isn't documented or consistently enforced.

🟢 A defined quality owner exists with documented criteria applied before any output drives a decision.

Here's the uncomfortable truth:

Most organizations are 🔴 red or 🟡 yellow.

Which means AI-generated insights are informing real decisions — budget allocations, campaign strategies, audience targeting — with no one formally accountable for whether they're right.

That's not an AI problem. That's a leadership problem.

The organizations that will win in an AI-first market aren't necessarily the ones with the best tools.

They're the ones who know who's responsible when the tools get it wrong.

You Can't Close a Gap You Can't See

Be honest with yourself for a moment.

When you look across your organization today — how would you describe your relationship with AI?

Not your ambition. Not your roadmap.

Right now. Today!

Quick self-score:

🔴 Mostly individual experimentation — ChatGPT, Copilot, whatever someone found on their own. No organizational approach.

🟡 AI exists in specific pockets, but it's inconsistent across teams, with no shared standards.

🟠 Actively used across multiple functions with some governance starting to emerge.

🟢 Deeply embedded — consistently tied to measurable business outcomes across the organization.

Here's what I've observed:

Most think they're 🟠 orange.

Most are actually 🟡 yellow.

Few are honest enough, or have enough visibility, to know the difference.

That gap between perceived maturity and actual maturity? You can't close a gap you can't see.

Can You Prove It?

The AI Readiness Reality Check:

Most organizations are running AI experiments.

Very few are running AI-native businesses.

Here's the question that separates them:

"Can you point to a specific business outcome — revenue, performance, cost, speed — directly attributable to AI?"

Not "we're using it." Not "the team loves it."

Proof.

Quick self-score. Pick your honest answer:

🔴 "We can't quantify it — AI is happening but no one's tracking outcomes."

🟡 "We sense it's contributing but haven't connected it to real metrics."

🟠 "We have data points in specific areas but nothing systematic."

🟢 "We actively track AI's contribution against defined KPIs every quarter."

Most organizations will likely fall somewhere between 🔴 red and 🟡 yellow.

Not because they lack smart people.

Because no one defined success before AI got deployed. No one owns the measurement. AI was brought in to impress — not to activate.

That's the gap. And it's fixable.

I Built a RAG Agent Over the Weekend

My mind is officially blown. Out of boredom, I built a RAG agent over this past weekend. What hit me hardest (even by my own benchmarks) is how quickly this tech is forcing every serious team to re-architect on the fly.

What started as a simple data processing idea turned into a full end-to-end pipeline: drop an Excel template → auto-chunk/embed into Supabase vector store → instant agentic analysis and classification → export clean CSV output to Google Drive → plus an always-on chat agent with memory and citations.
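If the chunk → embed → retrieve part of that pipeline is new to you, here is a toy sketch of the idea. It is not my n8n/Supabase build: the store is an in-memory list, the "embedding" is a plain word count standing in for a real embedding model, and every function name is hypothetical.

```python
import math
import re
from collections import Counter


def chunk(text: str, size: int = 8) -> list[str]:
    """Split text into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real pipeline calls a model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def build_store(docs: dict[str, str]) -> list[dict]:
    """Chunk and embed each document, keeping the source name for citations."""
    store = []
    for source, text in docs.items():
        for n, piece in enumerate(chunk(text)):
            store.append(
                {"source": source, "chunk": n, "text": piece, "vector": embed(piece)}
            )
    return store


def retrieve(store: list[dict], question: str, k: int = 2) -> list[dict]:
    """Return the k chunks most similar to the question."""
    q = embed(question)
    return sorted(store, key=lambda row: cosine(q, row["vector"]), reverse=True)[:k]
```

Each retrieved row carries its `source` and `chunk` number, which is what lets a chat agent cite where an answer came from.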

The real jaw-dropper? n8n's built-in AI-assisted builder and agent nodes let you visually orchestrate complex logic like it's Lego. The AI built it all by itself - just from my prompts!

Supabase's pgvector integration makes self-hosted, production-grade vector search feel effortless (no separate Pinecone/Weaviate needed). Again, the built-in AI built the vector stores and chat memory needed for my project.

The killer feature: export any workflow as clean JSON → paste it into Grok, Claude, GPT, or Gemini → describe tweaks or entirely new use cases → get back updated/brand-new import-ready JSON in seconds.
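One caution from doing this loop: model-generated JSON can reference nodes that don't exist. A small sanity check before importing could look like the sketch below. It assumes the export has a top-level `nodes` list (each with a `name`) and a `connections` object keyed by source node name; verify that against your own export, since I'm treating that shape as an assumption. `check_workflow` is a hypothetical helper, not part of n8n.

```python
import json


def check_workflow(raw: str) -> list[str]:
    """Return problems found in a workflow export; an empty list means none found."""
    try:
        wf = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc}"]
    if not isinstance(wf, dict) or not isinstance(wf.get("nodes"), list):
        return ["expected an object with a 'nodes' list"]

    names = [str(node.get("name", "")) for node in wf["nodes"] if isinstance(node, dict)]
    duplicates = sorted({n for n in names if names.count(n) > 1})
    problems = [f"duplicate node name: {n}" for n in duplicates]

    # Assumed shape: {"Source": {"main": [[{"node": "Target", ...}]]}}
    for source, outputs in wf.get("connections", {}).items():
        if source not in names:
            problems.append(f"connection from unknown node: {source}")
        for branches in outputs.values():
            for branch in branches:
                for link in branch or []:
                    if link.get("node") not in names:
                        problems.append(
                            f"connection to unknown node: {link.get('node')}"
                        )
    return problems
```

An empty result doesn't prove the workflow is correct. It only catches the cheap structural mistakes before you spend time testing in the editor.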

Iterating went from hours of node-dragging to minutes of natural-language prompting. That let me accelerate the whole process: describe → generate → import → test → refine.

Gang - this isn't just tooling - it's a glimpse of the foundational shift AI is forcing on every organization. The capabilities are arriving faster than most teams can restructure around them. Companies that treat automation + agents as a core competency (not an IT side project) will pull ahead dramatically. The rest risk being left behind or playing a game of catch-up.

If you're experimenting with AI workflows, RAG agents, or no-code/low-code orchestration - what's blowing your mind right now? Drop a comment—I'd love to hear your wins, war stories, or next experiments.

(Pro tip: If you're on n8n, try exporting a workflow and feeding it to your favorite frontier model. The loop is addictive.)

#AI #Automation #RAG #n8n #Supabase #AgenticAI #NoCode #FutureOfWork #LLM

Building on Sand

AI hype is deafening in 2026… but most results stay silent.

Everyone’s rushing AI pilots, agents, and “smart” dashboards—yet the majority quietly flop.

The hard truth: AI fails without rock-solid data integration + ontology.

It’s not table stakes; it’s the entire game.

Read on for why foundations beat fancy LLMs every time—and what to build first 👇

The AI hype is loud… but the foundation is silent.

Everyone’s racing to launch AI pilots, copilots, agents, dashboards that “talk.” Yet most initiatives quietly under-deliver.

Why? Because we keep building on sand.

The hard truth: AI’s promise collapses without rock-solid data integration and ontology.

What actually matters first — the real priority:

Connecting disparate data sources

Cleaning and normalizing the mess (with real data checks and cross-checks)

Building the semantic layers (ontologies) that define:

• Business rules and context

• Relationships between entities

• A shared glossary and taxonomy

• True meaning — how the business actually operates
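To make "shared glossary" and "cross-checks" concrete, here is a deliberately tiny sketch. It assumes two sources that report spend per channel under different labels. The `GLOSSARY` entries, field names, and tolerance are all hypothetical placeholders, and a real ontology carries far more than a synonym table.

```python
# Hypothetical shared glossary: maps each source's label to one canonical term.
GLOSSARY = {
    "paid social": "paid_social",
    "social (paid)": "paid_social",
    "sem": "paid_search",
    "paid search": "paid_search",
}


def normalize(rows: list[dict]) -> tuple[dict[str, float], list[str]]:
    """Sum spend per canonical channel; collect labels the glossary doesn't cover."""
    totals: dict[str, float] = {}
    unknown: list[str] = []
    for row in rows:
        canonical = GLOSSARY.get(row["channel"].strip().lower())
        if canonical is None:
            unknown.append(row["channel"])  # surface it, don't silently drop it
            continue
        totals[canonical] = totals.get(canonical, 0.0) + float(row["spend"])
    return totals, unknown


def cross_check(
    a: dict[str, float], b: dict[str, float], tolerance: float = 0.01
) -> list[str]:
    """List channels whose totals differ between two sources by more than
    `tolerance`, measured relative to the larger of the two values."""
    mismatches = []
    for channel in sorted(a.keys() | b.keys()):
        x, y = a.get(channel, 0.0), b.get(channel, 0.0)
        if abs(x - y) > tolerance * max(abs(x), abs(y)):
            mismatches.append(channel)
    return mismatches
```

Unknown labels and mismatched totals are returned rather than hidden. That list is the unglamorous work queue: every item on it is a definition the business hasn't agreed on yet.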

It’s not glamorous. It’s not flashy.

It’s the invisible plumbing that turns chaos into clarity.

But once that foundation is in place — once your data is connected, trusted, and semantically rich — everything changes.

You can finally layer on, with confidence:

• Real-time analytics

• Executive dashboards that actually mean something

• Machine learning models that learn the right things

• Generative AI that delivers trustworthy, contextual answers

No more garbage-in, gospel-out.

No more “the AI said…” followed by boardroom eye-rolls.

The winners in the next decade won’t be the ones with the fanciest LLMs. They’ll be the ones that quietly nailed the data foundation years earlier.

Data integration and ontology aren’t “table stakes.”

They’re the entire game.

If you’re leading digital or AI transformation, ask yourself:

Are we still skipping the foundation… or finally building it right?

Finally. A Map for the AI Chaos.

AI buzzwords blur fast: prompts, RAG, agents, guardrails, embeddings... total overload.

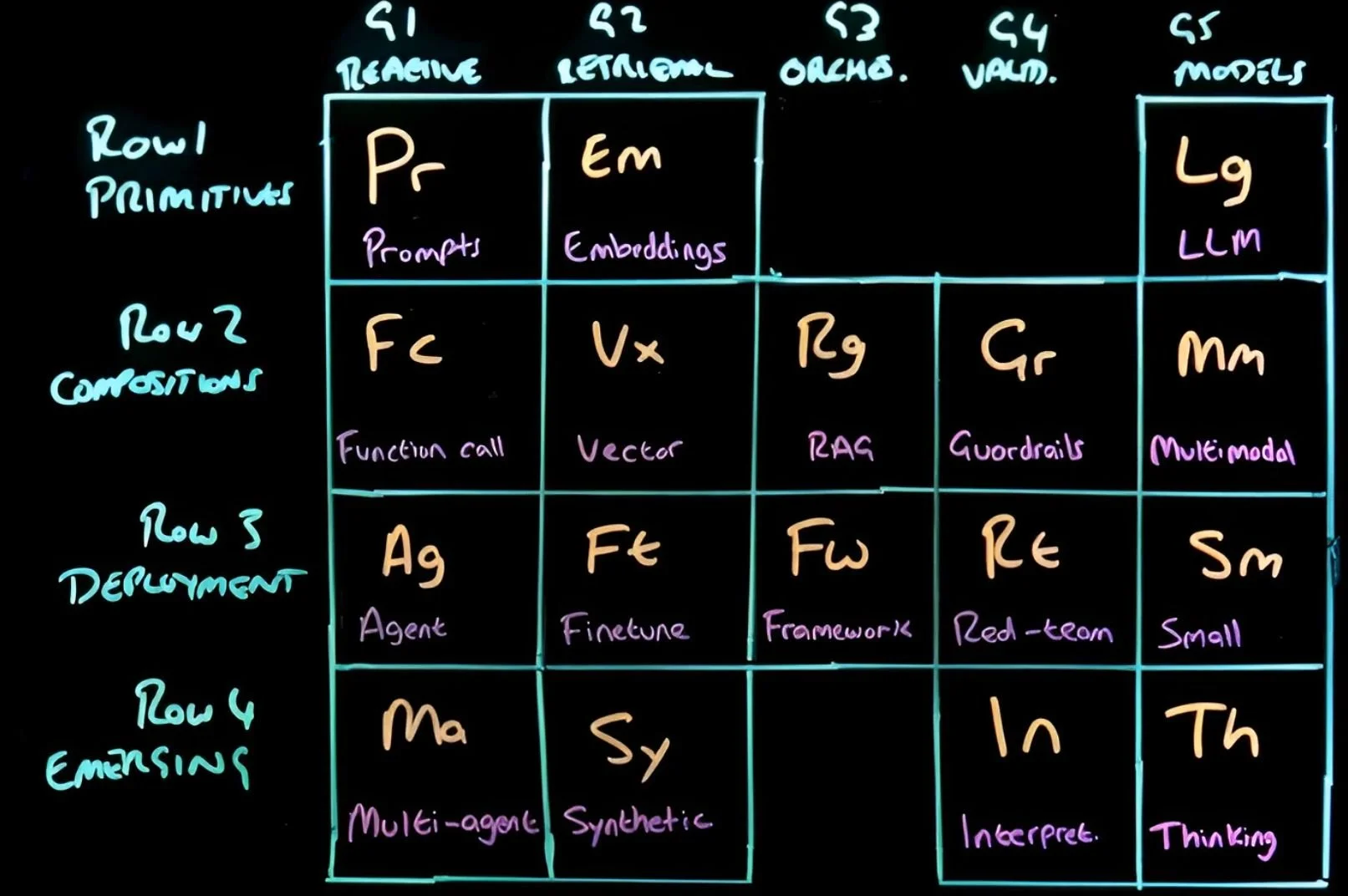

I recently watched Martin Keen's IBM video on the "AI Periodic Table" (link below). It offers a brilliant mental model that organizes these concepts like the chemical periodic table.

Rows build from basics to advanced (Primitives, Compositions, Deployment, and Emerging) and the columns group by family (Reactive, Retrieval, Orchestration, Validation, Models).

The magic? It lets you decompose any AI app or project into its core "elements." After watching the video, I encourage you to map out what you're building (or evaluating), spot gaps (missing guardrails?), uncover smart combos, and predict how components interact - like chemical reactions. Have fun with it!

I've used it personally to break down my own projects: drop components onto the grid, identify missing pieces, and clarify the architecture fast. It turns hype into something structured and actionable.
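If you prefer a script to a whiteboard, the gap-spotting step can be sketched in a few lines. The row and family names come from the table as described above; where each component sits is your call after watching the video, so the placements you pass in are your own assumptions, and `missing_families` is a hypothetical helper.

```python
ROWS = ["Primitives", "Compositions", "Deployment", "Emerging"]
FAMILIES = ["Reactive", "Retrieval", "Orchestration", "Validation", "Models"]


def missing_families(project: dict[str, tuple[str, str]]) -> list[str]:
    """Given component -> (row, family), return the families with no component."""
    for name, (row, family) in project.items():
        if row not in ROWS or family not in FAMILIES:
            raise ValueError(f"{name}: unknown grid position ({row}, {family})")
    used = {family for _, family in project.values()}
    return [f for f in FAMILIES if f not in used]
```

Pass in your own placements and read the result as a prompt for discussion, not a verdict. An empty family may be a real gap (no guardrails) or a deliberate choice.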

Watch the video here: https://www.youtube.com/watch?v=ESBMgZHzfG0

What AI project or use case are you tackling right now? Try mapping it to the table - what elements are in play, and what's missing?